AI Crypto Scams: How Deepfakes and Voice Cloning Are Used

Why this matters

AI has made crypto scams faster, more targeted, and harder to detect. Impersonation fraud surged 1,400% in 2025, and AI-assisted scam operations take in about 4.5 times as much as traditional ones. If your wallet address is linked to a public profile or your voice appears in any online video, you may already be a candidate for targeting.

Losses to crypto scams reached $17 billion in 2025, with AI-assisted scams the fastest-growing category. Impersonation scams alone surged 1,400% year-over-year, according to the Chainalysis 2026 Crypto Crime Report, as deepfake tools and voice cloning became accessible enough for large-scale fraud operations to deploy at speed. The shift matters: attackers no longer need a code exploit. They need a few seconds of your voice and a convincing profile.

How AI Changed the Fraud Playbook

Until recently, crypto fraud required either technical skill or a large, coordinated human team. AI has removed both barriers. Scam operations with links to AI tools now extract an average of $3.2 million per operation compared to $719,000 for traditional schemes, a 4.5x difference (Chainalysis, 2026). Generative AI activity tied to scam infrastructure grew 456% between May 2024 and April 2025 (TRM Labs, 2025).

About 60% of all deposits into scam wallets now come from operations that use AI tools (Chainalysis, 2026). This concentration means the risk is not evenly distributed: holders who are publicly active on social media, who have appeared in videos, or whose wallet addresses are linked to identifiable online profiles are disproportionately targeted.

Two capabilities explain most of this shift. First, voice cloning: tools can replicate tone, accent, and cadence from as little as three seconds of publicly available audio, enough to produce a convincing support call or family emergency scenario. Second, personalisation at scale: large language models let scammers craft thousands of individually tailored messages that reference a target's real transaction history, wallet address, or social media activity. The average scam payment rose 253% in 2025, from $782 to $2,764 (Chainalysis, 2026).
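
The two headline ratios in this section can be re-derived from the figures quoted above. The snippet below is a small arithmetic check only; the variable names are illustrative, and the inputs are the rounded numbers cited in this article, so the results are approximate.

```python
# Figures as quoted in this article (Chainalysis, 2026); variable names are illustrative.
ai_avg_per_operation = 3_200_000        # USD, AI-linked scam operations
traditional_avg_per_operation = 719_000  # USD, traditional schemes

# Ratio behind the "4.5x" figure (comes out slightly under 4.5 before rounding).
multiple = ai_avg_per_operation / traditional_avg_per_operation

old_avg_payment = 782    # USD, earlier average scam payment
new_avg_payment = 2_764  # USD, 2025 average scam payment

# Percentage increase = (new - old) / old * 100
pct_increase = (new_avg_payment - old_avg_payment) / old_avg_payment * 100

print(f"AI vs traditional: {multiple:.2f}x")
print(f"Average payment increase: {pct_increase:.1f}%")
```

Note that a 253% increase means the new average is roughly 3.5 times the old one, not 2.5 times.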

The Three AI-Assisted Scam Types Hitting Holders Right Now

Fake support and exchange impersonation. Scammers create near-identical handles on X, Telegram, and Discord, then reach out proactively, sometimes with cloned voice calls, claiming there is a security issue with your account. These build on classic phishing tactics, now made far harder to detect by AI-generated voice and text. The goal is always the same: a seed phrase, a 2FA code, or a click on a link that installs credential-stealing software. No legitimate exchange or wallet provider initiates contact this way.
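
To make "near-identical handles" concrete, here is a minimal Python sketch of how a look-alike check could work. Everything in it is an assumption for illustration: the handles in `OFFICIAL_HANDLES` are made up, the character map is far from exhaustive, and the 0.85 threshold is arbitrary. It uses only the standard library (`difflib`, `unicodedata`).

```python
import difflib
import unicodedata

# Hypothetical handles you have verified yourself on the platform's official site.
OFFICIAL_HANDLES = {"examplexchange", "examplewallet"}

# Small illustrative map of look-alike characters and separators; not exhaustive.
CONFUSABLES = str.maketrans(
    {"0": "o", "1": "l", "3": "e", "5": "s", "_": "", ".": "", "-": ""}
)


def clean(handle: str) -> str:
    """Lowercase the handle, drop a leading '@', and normalise Unicode forms."""
    return unicodedata.normalize("NFKC", handle).lstrip("@").lower()


def fold(handle: str) -> str:
    """Collapse common character swaps so 'examp1e' and 'example' compare equal."""
    return clean(handle).translate(CONFUSABLES)


def classify_handle(handle: str, threshold: float = 0.85) -> str:
    """Return 'official', 'lookalike', or 'unknown' for a given handle."""
    if clean(handle) in OFFICIAL_HANDLES:
        return "official"
    folded = fold(handle)
    for official in OFFICIAL_HANDLES:
        target = fold(official)
        similarity = difflib.SequenceMatcher(None, folded, target).ratio()
        # Flag handles that embed the official name or are nearly identical to it.
        if target in folded or similarity >= threshold:
            return "lookalike"
    return "unknown"


for candidate in ["@ExampleXchange", "@examp1exchange", "@example_xchange_support"]:
    print(candidate, "->", classify_handle(candidate))
```

An exact match here only means the text of the handle matches your own list. It does not prove the account is genuine on a different platform, and it does nothing against cloned voice calls.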

Deepfake celebrity endorsements. AI-generated video of public figures, most commonly prominent tech executives, is used to promote giveaway schemes where viewers are instructed to send crypto to receive a larger amount in return. These clips run as paid advertising on social platforms and in direct messages. The scam relies on the face and voice being convincing enough that the viewer does not question the premise. No giveaway that requires you to send assets first is legitimate.

AI-generated expert networks. In January 2026, Check Point Research exposed an operation using 90 AI-generated personas acting as trading experts inside controlled Telegram and WhatsApp groups (Check Point Research, 2026). Victims were directed to install apps that displayed fabricated returns before withdrawals were blocked and wallets drained. The operation was indistinguishable from a real community until the exit.

These patterns connect to a broader shift covered in the Bybit hack breakdown: attackers are increasingly targeting people, not protocols.

What to Check in Your Own Setup

A few concrete steps reduce exposure to AI-assisted fraud. None require technical knowledge, but they do require pausing before acting on any unsolicited communication. The common thread across all AI scam types is urgency: pressure to act before you can verify.

- No legitimate service contacts you first. If a message arrives via DM, email, or call claiming urgency around your account, treat it as a probe. Go directly to the platform's official app or website using a URL you type yourself.

- Legitimate services never ask for seed phrases or 2FA codes. Any situation where sharing them is framed as necessary is a scam.

- Voice calls can be fabricated. If someone claiming to be support, a family member, or a colleague asks you to act immediately, call them back on a known number rather than the one that contacted you.

- Check your own gaps. You can map your full setup at Asset Alert to identify platforms where you have no 2FA, weak recovery options, or known incidents. These are the same gaps attackers screen for when selecting targets.
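
For readers who want to see why a link in an unsolicited message deserves suspicion, the sketch below shows one way to compare a URL's hostname against domains you have verified yourself. It is an illustration under stated assumptions, not a replacement for typing the address by hand: `OFFICIAL_DOMAINS` and `example-exchange.com` are hypothetical, and the check does not cover every trick (for example, look-alike Unicode domains simply fail the comparison rather than being explained).

```python
from urllib.parse import urlsplit

# Hypothetical domains you have verified yourself; replace with your own list.
OFFICIAL_DOMAINS = {"example-exchange.com"}


def is_official_link(url: str) -> bool:
    """Return True only for HTTPS links whose host is an official domain or its subdomain."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # hostname drops any "user@" prefix and port, which scammers use to disguise links.
    host = (parts.hostname or "").lower().rstrip(".")
    return any(
        host == domain or host.endswith("." + domain)
        for domain in OFFICIAL_DOMAINS
    )


links = [
    "https://example-exchange.com/login",
    "https://example-exchange.com.security-check.io/login",
    "https://example-exchange.com@evil.io/",
]
for link in links:
    print(link, "->", is_official_link(link))
```

The second and third links both display the official name prominently, yet the actual host is a different domain in each case, which is why comparing the parsed hostname is safer than scanning the text of the link.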

Related Articles

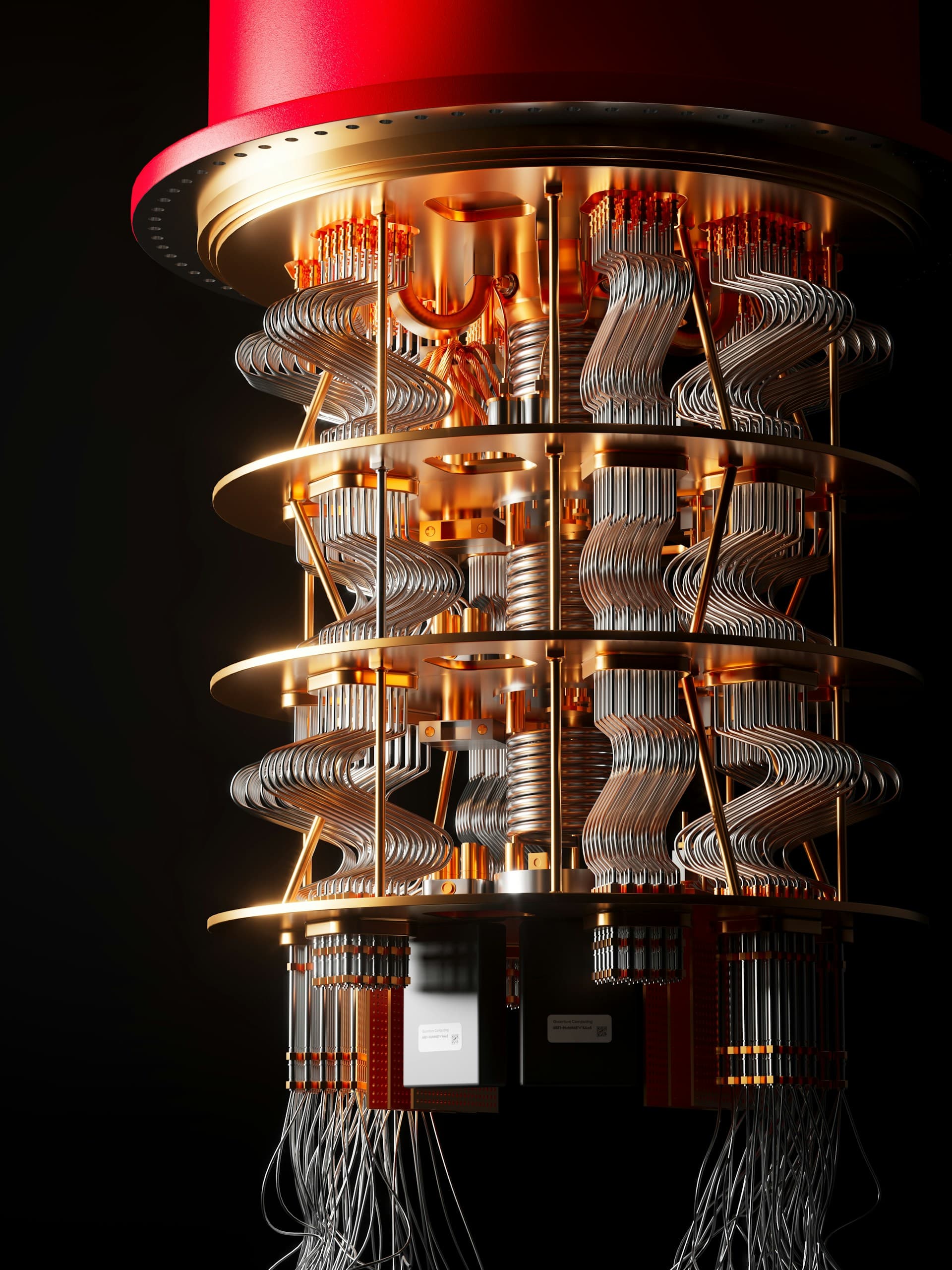

Quantum Computing and Crypto: What Holders Need to Know

How quantum computing threatens Bitcoin and Ethereum security, which addresses are at risk today, and what major networks are doing about it.

Hardware Wallet Blind Signing: The Risk Behind the Button

Blind signing lets attackers drain your assets with a single approval — no seed phrase required. Here's what it means and what to check in your setup.

Crypto Phishing Scams: How They Work and How to Avoid Them

Crypto phishing scams cost holders $17 billion in 2025. Learn how impersonation attacks, wallet drainers, and address poisoning work — and what to do.